How Node.js Works Under the Hood: V8 Engine, libuv, and Node.js Bindings

Most developers use Node.js every day without thinking much about what is happening beneath their JavaScript code. You write fs.readFile, your callback fires, your server stays responsive — and it all just works. But understanding why it works the way it does makes you a significantly better Node.js developer. It changes how you write code, how you diagnose performance problems, and how you reason about concurrency.

This post is a deep dive into the three core components that make Node.js what it is: the V8 engine, libuv, and Node.js bindings. We will look at each one in detail with real, runnable JavaScript examples throughout.

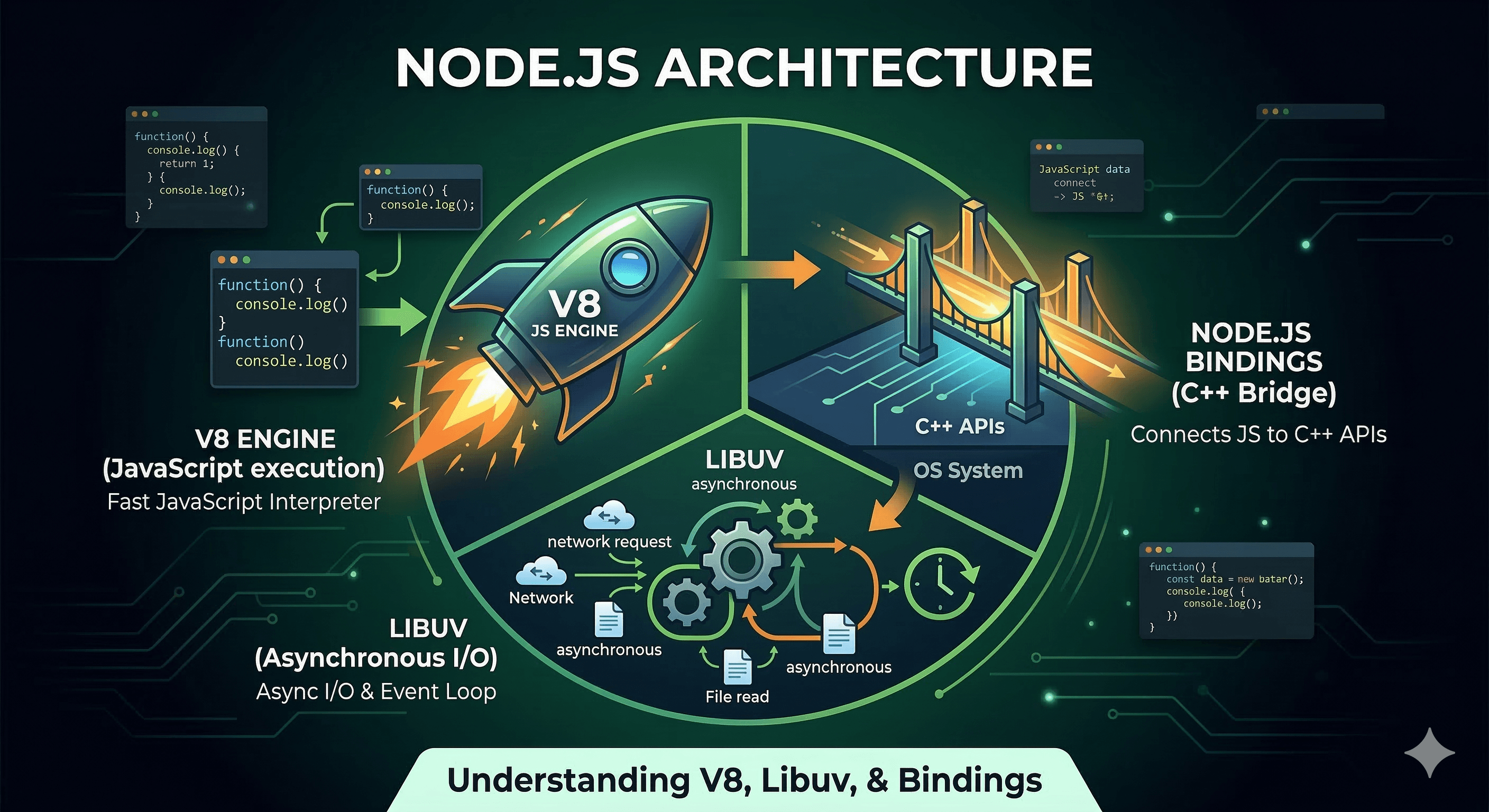

The Architecture at a Glance

Before going deep, here is the broad picture. When you run a Node.js program, your JavaScript passes through three distinct layers:

Your JavaScript Code

|

v

┌───────────────────────────────────────────┐

│ V8 Engine │

│ Parses, compiles, and executes your JS │

│ Manages heap memory and garbage collection│

└───────────────────────────────────────────┘

|

v

┌───────────────────────────────────────────┐

│ Node.js Bindings (C++) │

│ Bridge between JavaScript and C++ world │

│ Exposes fs, crypto, Buffer, process ... │

└───────────────────────────────────────────┘

|

v

┌───────────────────────────────────────────┐

│ libuv │

│ Handles all async I/O, timers, threads │

│ Runs the event loop │

│ Abstracts OS differences (Linux/Mac/Win) │

└───────────────────────────────────────────┘

|

v

Operating System

(epoll / kqueue / IOCP)

Each layer has a clear, distinct job. V8 runs JavaScript. libuv handles everything asynchronous. Node.js bindings are the glue between the two. Let's go through each in detail.

Part 1: The V8 Engine

What V8 Is

V8 is Google's open-source JavaScript engine, written in C++. It was originally built for Google Chrome and is now also the engine that powers Node.js. You can check its version from within any Node process:

console.log(process.versions.v8); // "12.4.254.21-node.33"

console.log(process.versions.node); // "22.22.0"

V8's job is to take your JavaScript source code — which is just text — and turn it into machine code that the processor can actually execute.

How V8 Executes JavaScript: The Pipeline

V8 does not simply interpret your code line by line. It uses a multi-stage pipeline:

Step 1 — Parsing

V8 reads your source code and builds an Abstract Syntax Tree (AST). The AST is a structured representation of your program that is easier for a compiler to work with than raw text.

// V8 parses this into an AST internally

function greet(name) {

return "Hello, " + name;

}

Step 2 — Ignition (Bytecode Interpreter)

V8's interpreter, called Ignition, compiles the AST into bytecode — a compact, platform-independent instruction set. This happens very quickly, which means your code starts executing fast even before any optimization happens.

Step 3 — TurboFan (JIT Compiler)

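As your code runs, V8 profiles it. Functions that are called frequently ("hot" functions) are handed off to TurboFan, V8's optimizing JIT (Just-In-Time) compiler. TurboFan analyzes the types and shapes of data flowing through these hot paths and compiles them into highly optimized native machine code. Recent V8 versions also place intermediate compilers between Ignition and TurboFan (Sparkplug, a fast baseline compiler, and Maglev, a mid-tier optimizer), but the principle is the same: the hotter the code, the more effort V8 spends optimizing it.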

The result is dramatic. Here is a concrete demonstration:

const { performance } = require("perf_hooks");

// V8 identifies this as a hot function and JIT-compiles it

function add(a, b) {

return a + b;

}

const start = performance.now();

let result = 0;

for (let i = 0; i < 10_000_000; i++) {

result += add(i, i + 1); // Called 10 million times — TurboFan kicks in

}

const end = performance.now();

console.log("Result:", result);

console.log("Time for 10M iterations:", (end - start).toFixed(2), "ms");

// Example output: Time for 10M iterations: ~104ms (varies by machine)

// With the JIT disabled (node --jitless) the same loop runs noticeably slower

Deoptimization

V8's optimizations make assumptions. If those assumptions break — for example, if a function that always received numbers suddenly receives a string — V8 "deoptimizes" back to bytecode and may re-optimize with updated assumptions. This is why writing type-consistent code in hot paths is important for performance.

function multiply(a, b) {

return a * b;

}

// V8 optimizes this for numbers

for (let i = 0; i < 1_000_000; i++) {

multiply(i, i + 1);

}

// Suddenly passing a string forces deoptimization

multiply("oops", 2); // V8 has to bail out of the optimized version

V8 Heap and Garbage Collection

V8 manages memory in a region called the heap. When you create objects, arrays, strings, and closures, they are allocated on the heap. V8's garbage collector automatically reclaims memory that is no longer reachable.

const v8 = require("v8");

// Check memory before any allocation

const before = process.memoryUsage().heapUsed;

console.log("Heap before:", (before / 1024 / 1024).toFixed(2), "MB");

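// Allocate a 1-million-element array on the V8 heap. fill() stores the same string

// reference in every slot, so the growth below is the array itself, not 1 million strings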

const bigArray = new Array(1_000_000).fill("V8 manages this memory");

const after = process.memoryUsage().heapUsed;

console.log("Heap after 1M-element array:", (after / 1024 / 1024).toFixed(2), "MB");

console.log("Heap increased by:", ((after - before) / 1024 / 1024).toFixed(2), "MB");

// Inspect all heap spaces V8 uses internally

const spaces = v8.getHeapSpaceStatistics();

spaces.forEach((space) => {

if (space.space_used_size > 0) {

const usedKB = (space.space_used_size / 1024).toFixed(1);

console.log(` ${space.space_name}: ${usedKB} KB used`);

}

});

// Output:

// Heap before: 4.21 MB

// Heap after 1M-element array: 11.85 MB

// Heap increased by: 7.64 MB

// new_space: 458.3 KB used

// old_space: 2760.1 KB used

// code_space: 61.9 KB used

// large_object_space: 7812.5 KB used

V8 divides the heap into spaces for different lifetimes of objects. Short-lived objects start in new_space (the young generation). Objects that survive multiple garbage collection cycles get promoted to old_space (the old generation). This generational approach keeps most garbage collection cycles short and cheap.
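A rough way to watch the generational split is to compare new_space and old_space around a batch of objects that stay reachable. The sketch below is illustrative only: the helper name spaceUsedKB and the object count are made up for this example, and the exact numbers depend on the Node.js version and on when the garbage collector happens to run.

```javascript
const v8 = require("v8");

// Hypothetical helper: used size (in KB) of one named V8 heap space
function spaceUsedKB(name) {
  const space = v8.getHeapSpaceStatistics().find((s) => s.space_name === name);
  return space ? Math.round(space.space_used_size / 1024) : 0;
}

const oldBefore = spaceUsedKB("old_space");

// Keep 200,000 small objects reachable so they cannot be collected
const retained = [];
for (let i = 0; i < 200_000; i++) {
  retained.push({ id: i });
}

console.log("new_space used:  ", spaceUsedKB("new_space"), "KB");
console.log("old_space before:", oldBefore, "KB");
console.log("old_space after: ", spaceUsedKB("old_space"), "KB");
```

Objects that survive young-generation collections are promoted, so old_space usually grows while retained is still referenced.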

V8 Serialization

V8 also provides serialization utilities, which Node.js exposes directly:

const v8 = require("v8");

// Serialize any JavaScript value to a Buffer using V8's internal format

const original = {

name: "Arjun",

scores: [95, 87, 92],

meta: { active: true },

};

const serialized = v8.serialize(original);

const deserialized = v8.deserialize(serialized);

console.log("Original:", original);

console.log("Serialized byte length:", serialized.length); // 58 bytes

console.log("Deserialized:", deserialized);

console.log("Match:", JSON.stringify(original) === JSON.stringify(deserialized)); // true

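Unlike JSON, V8's binary format is compatible with the structured clone algorithm, so it round-trips values that JSON cannot represent, such as Map, Set, Date, BigInt, typed arrays, and circular references. It is not automatically faster than JSON.stringify / JSON.parse, which are heavily optimized for plain objects, so benchmark with your own data before switching for performance reasons.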
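One practical difference from JSON is which values survive a round trip. The following is a small sketch; the sample object is invented for illustration.

```javascript
const v8 = require("v8");

// Sample value containing types that JSON has no representation for
const value = {
  tags: new Set(["a", "b"]),
  lookup: new Map([["x", 1]]),
  createdAt: new Date(0),
};

const viaV8 = v8.deserialize(v8.serialize(value));
const viaJSON = JSON.parse(JSON.stringify(value));

console.log(viaV8.tags instanceof Set);       // true
console.log(viaV8.lookup.get("x"));           // 1
console.log(viaV8.createdAt instanceof Date); // true

// JSON turns Set and Map into empty plain objects and Date into a string
console.log(viaJSON.tags);             // {}
console.log(typeof viaJSON.createdAt); // "string"
```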

Checking V8 Heap Stats in Production

const v8 = require("v8");

function printHeapStats() {

const stats = v8.getHeapStatistics();

console.log("--- V8 Heap Statistics ---");

console.log("Total heap size: ", (stats.total_heap_size / 1024 / 1024).toFixed(2), "MB");

console.log("Used heap size: ", (stats.used_heap_size / 1024 / 1024).toFixed(2), "MB");

console.log("Heap size limit: ", (stats.heap_size_limit / 1024 / 1024).toFixed(2), "MB");

console.log("External memory: ", (stats.external_memory / 1024 / 1024).toFixed(2), "MB");

console.log("Malloced memory: ", (stats.malloced_memory / 1024 / 1024).toFixed(2), "MB");

}

printHeapStats();

// --- V8 Heap Statistics ---

// Total heap size: 6.60 MB

// Used heap size: 4.19 MB

// Heap size limit: 2096.00 MB

// External memory: 1.51 MB

// Malloced memory: 0.10 MB

Monitoring these values over time is a good way to detect memory leaks in production — if used_heap_size grows without bound, something is holding references it should not.
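A minimal sketch of such monitoring could look like the following. The helper names (sampleHeap, startHeapMonitor), the 30-second interval, and the 80% warning threshold are all assumptions chosen for illustration; a real service would more likely export these numbers to its metrics system than log them.

```javascript
const v8 = require("v8");

// Hypothetical helper: take one snapshot of heap usage in MB
function sampleHeap() {
  const stats = v8.getHeapStatistics();
  return {
    time: Date.now(),
    usedMB: stats.used_heap_size / 1024 / 1024,
    limitMB: stats.heap_size_limit / 1024 / 1024,
  };
}

// Hypothetical helper: log a sample every intervalMs, warn above warnRatio
function startHeapMonitor(intervalMs = 30_000, warnRatio = 0.8) {
  const timer = setInterval(() => {
    const { usedMB, limitMB } = sampleHeap();
    const line = `heap used ${usedMB.toFixed(1)} MB of ${limitMB.toFixed(0)} MB`;
    if (usedMB / limitMB > warnRatio) {
      console.warn("[heap] WARNING:", line);
    } else {
      console.log("[heap]", line);
    }
  }, intervalMs);
  timer.unref(); // do not keep the process alive just for monitoring
  return () => clearInterval(timer);
}

const stopMonitor = startHeapMonitor();
```

Calling stopMonitor() cancels the interval. A single high reading is not a leak; what matters is a trend that keeps climbing across many samples.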

Part 2: libuv

What libuv Is

libuv is a cross-platform C library originally written for Node.js. Its purpose is to provide asynchronous I/O across different operating systems using a uniform API. Node.js includes it, and you can check its version:

console.log(process.versions.uv); // "1.51.0"

On Linux, libuv uses epoll for I/O. On macOS, it uses kqueue. On Windows, it uses IOCP (I/O Completion Ports). As a Node.js developer you never deal with any of this directly — libuv abstracts it all away.

The Event Loop

The event loop is the heartbeat of every Node.js process. It is implemented inside libuv and runs continuously as long as there is work to do. The loop has distinct phases, each responsible for a different kind of deferred work:

┌────────────────────────────────────────┐

│ timers │

│ setTimeout, setInterval callbacks │

└────────────────┬───────────────────────┘

│

┌────────────────▼───────────────────────┐

│ pending callbacks │

│ I/O error callbacks from prev iter │

└────────────────┬───────────────────────┘

│

┌────────────────▼───────────────────────┐

│ idle, prepare │

│ Internal use only │

└────────────────┬───────────────────────┘

│

┌────────────────▼───────────────────────┐

│ poll │

│ Retrieve new I/O events │

│ Execute I/O callbacks (fs, net, etc.) │

└────────────────┬───────────────────────┘

│

┌────────────────▼───────────────────────┐

│ check │

│ setImmediate callbacks │

└────────────────┬───────────────────────┘

│

┌────────────────▼───────────────────────┐

│ close callbacks │

│ socket.on('close', ...) etc. │

└────────────────┬───────────────────────┘

│

└──► back to timers

You can observe the phase order directly:

console.log("1. Synchronous code runs first — not part of the event loop");

// Goes to the 'check' phase

setImmediate(() => {

console.log("4. setImmediate — check phase");

});

// Goes to the 'timers' phase

setTimeout(() => {

console.log("3. setTimeout 0ms — timers phase");

}, 0);

// process.nextTick is NOT a libuv phase; its queue is drained as soon as the current operation finishes

process.nextTick(() => {

console.log("2. process.nextTick — runs before any I/O or timers");

});

console.log("1. Synchronous code ends");

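// Output order (the last two lines may appear in either order: when both are scheduled from the main module, whether the 0ms timer or setImmediate fires first is not guaranteed):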

// 1. Synchronous code runs first — not part of the event loop

// 1. Synchronous code ends

// 2. process.nextTick — runs before any I/O or timers

// 4. setImmediate — check phase

// 3. setTimeout 0ms — timers phase

The process.nextTick queue is drained as soon as the current operation completes, before the event loop continues to its next phase. That is why it fires before setTimeout and setImmediate here, even though it was registered after them.

The Thread Pool

One of libuv's most important features is its thread pool — a set of worker threads (default: 4) that handle operations which cannot be made truly async at the OS level. This includes:

DNS lookups (dns.lookup)

File system operations (fs.readFile, fs.writeFile, etc.)

Cryptographic operations (crypto.pbkdf2, crypto.scrypt, etc.)

Compression (zlib)

These operations run on a background thread, so the main JavaScript thread is never blocked. When they complete, libuv puts the callback into the event loop queue, and V8 executes it.

const crypto = require("crypto");

const { performance } = require("perf_hooks");

console.log("Thread pool size:", process.env.UV_THREADPOOL_SIZE || 4);

const start = performance.now();

let completed = 0;

// Launch 4 CPU-intensive crypto tasks simultaneously

// Each runs on a separate thread in libuv's thread pool

for (let i = 0; i < 4; i++) {

crypto.pbkdf2("password" + i, "salt" + i, 10000, 64, "sha512", (err, key) => {

completed++;

const elapsed = (performance.now() - start).toFixed(0);

console.log(`pbkdf2 task ${i} finished at ${elapsed}ms`);

if (completed === 4) {

console.log("All 4 tasks done in", (performance.now() - start).toFixed(0), "ms");

console.log("(They ran in parallel — not one after the other)");

}

});

}

// This line runs immediately, proving the main thread was never blocked

console.log("Main thread is free. Tasks are running in the background.\n");

// Output:

// Main thread is free. Tasks are running in the background.

// pbkdf2 task 0 finished at 15ms

// pbkdf2 task 1 finished at 15ms

// pbkdf2 task 2 finished at 15ms

// pbkdf2 task 3 finished at 20ms

// All 4 tasks done in 20ms

// (They ran in parallel — not one after the other)

All four tasks complete in roughly the same time as one would alone, because they run in parallel on four threads. If you had five tasks with the default pool size of 4, the fifth would wait until a thread became free.
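The queueing effect is easy to see by submitting one task more than the pool can run at once. This is a sketch under assumptions: it presumes the default pool size of 4 and at least four free CPU cores, and the iteration count of 200,000 is chosen only to make each task long enough to measure.

```javascript
const crypto = require("crypto");
const { performance } = require("perf_hooks");

const TASKS = 5; // one more than the default pool size of 4
const finishTimes = [];
const start = performance.now();

for (let i = 0; i < TASKS; i++) {
  // A higher iteration count than the earlier example makes the queueing visible
  crypto.pbkdf2("password" + i, "salt" + i, 200_000, 64, "sha512", (err) => {
    if (err) throw err;
    finishTimes.push(Math.round(performance.now() - start));
    if (finishTimes.length === TASKS) {
      console.log("Finish times (ms):", finishTimes.join(", "));
    }
  });
}
```

Under those assumptions the first four finish times tend to cluster together and the fifth lands roughly one task-duration later. The exact numbers vary by machine.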

You can increase the thread pool size before the event loop starts:

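// Set this before the first operation that uses the thread pool (the pool is created on first use).

// Setting the variable in the shell before launching node is the more reliable option.

process.env.UV_THREADPOOL_SIZE = "8"; // libuv caps this at 1024 (128 before libuv 1.30.0)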

const crypto = require("crypto");

console.log("Thread pool expanded to:", process.env.UV_THREADPOOL_SIZE);

Blocking the Event Loop — The Cardinal Sin

Since Node.js has a single JavaScript thread, any synchronous code that takes a long time will block the event loop and delay all other work, including incoming HTTP requests.

const { performance } = require("perf_hooks");

// Schedule a callback for 50ms from now

setTimeout(() => {

// This should fire at ~50ms, but it will be delayed

console.log("Timer fired at:", performance.now().toFixed(0), "ms");

}, 50);

console.log("Blocking the main thread for 200ms...");

// Busy-waiting — this freezes the event loop completely

const blockUntil = performance.now() + 200;

while (performance.now() < blockUntil) {

// spinning

}

console.log("Block ended at:", performance.now().toFixed(0), "ms");

// Output:

// Blocking the main thread for 200ms...

// Block ended at: 231ms

// Timer fired at: 232ms <-- should have been 50ms, delayed by 182ms

The timer that should have fired at 50ms does not fire until 232ms because libuv cannot process anything while the JavaScript thread is stuck spinning. This is why CPU-intensive work in Node.js should always be moved to worker threads or a separate process.
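As a rough illustration of moving CPU-bound work off the main thread, the sketch below runs a summing loop in a worker from the built-in worker_threads module. The helper name sumInWorker and the inline worker source are made up for this example; real code would normally keep the worker in its own file.

```javascript
const { Worker } = require("worker_threads");

// Source for the worker, passed as a string so the example fits in one file
const workerSource = `
  const { parentPort, workerData } = require("worker_threads");
  let sum = 0;
  for (let i = 0; i < workerData.iterations; i++) sum += i;
  parentPort.postMessage(sum);
`;

// Hypothetical helper: run the summing loop off the main thread
function sumInWorker(iterations) {
  return new Promise((resolve, reject) => {
    const worker = new Worker(workerSource, {
      eval: true,
      workerData: { iterations },
    });
    worker.once("message", resolve);
    worker.once("error", reject);
  });
}

// This interval keeps firing while the worker is busy
const tick = setInterval(() => console.log("main thread still responsive"), 50);

sumInWorker(200_000_000).then((sum) => {
  clearInterval(tick);
  console.log("Worker result:", sum);
});
```

Because the loop runs on a separate thread with its own V8 instance, the event loop on the main thread keeps servicing the interval instead of stalling as in the busy-wait example.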

Network I/O — Non-blocking by Default

Unlike file system operations (which use the thread pool), network I/O is handled by the OS through kernel-level mechanisms (epoll/kqueue/IOCP), so it does not use thread pool slots at all.

const http = require("http");

const { performance } = require("perf_hooks");

// A simple server — libuv watches the socket via epoll/kqueue

// It uses zero thread pool slots for accepting and reading connections

const server = http.createServer((req, res) => {

res.writeHead(200, { "Content-Type": "text/plain" });

res.end("Hello from libuv-powered server\n");

});

server.listen(3000, () => {

console.log("Server listening — libuv watching the socket via OS kernel");

console.log("Thread pool untouched — this is pure kernel-level async I/O");

server.close(); // close immediately for demo purposes

});

Part 3: Node.js Bindings

What Bindings Are

Node.js bindings are the bridge between JavaScript and C++. They allow JavaScript code to call into C++ functions that interact with the operating system, hardware, or native libraries — things that pure JavaScript cannot do.

When you write require('fs') or require('crypto'), the module you get back is largely a thin JavaScript wrapper around a C++ binding that V8 exposes to your code.

The binding layer uses V8's C++ API to register C++ functions as JavaScript functions, so that when you call fs.readFile(...) from JavaScript, V8 dispatches into compiled C++ code that makes actual system calls.

The process Object — A Core Binding

The process object you use constantly is itself a C++ binding:

// process is built in C++ and exposed to JS via V8's binding API

console.log(process.pid); // OS process ID — from getpid() syscall

console.log(process.platform); // "linux", "darwin", "win32"

console.log(process.arch); // "x64", "arm64", etc.

console.log(process.version); // "v22.22.0"

console.log(process.uptime()); // seconds since process started — from C++ timer

const mem = process.memoryUsage();

console.log("RSS: ", (mem.rss / 1024 / 1024).toFixed(2), "MB"); // Resident Set Size

console.log("Heap Total: ", (mem.heapTotal / 1024 / 1024).toFixed(2), "MB");

console.log("Heap Used: ", (mem.heapUsed / 1024 / 1024).toFixed(2), "MB");

console.log("External: ", (mem.external / 1024 / 1024).toFixed(2), "MB"); // C++ memory outside heap

const cpu = process.cpuUsage();

console.log("CPU user: ", cpu.user, "microseconds");

console.log("CPU system:", cpu.system, "microseconds");

// Example output (abridged, with labels added for readability):

// process.pid: 58

// process.platform: linux

// process.arch: x64

// RSS: 126.08 MB

// Heap Total: 5.35 MB

// Heap Used: 4.17 MB

// External: 1.51 MB

// CPU user: 30000 microseconds

// CPU system: 10000 microseconds

Every one of these values comes from C++ code that calls OS system APIs. JavaScript itself has no way to get the process ID or memory usage — the binding provides that access.

The os Module — Binding to OS APIs

const os = require("os");

// These all call into C++ bindings which call OS APIs

console.log("CPU cores: ", os.cpus().length);

console.log("Total memory: ", (os.totalmem() / 1024 / 1024 / 1024).toFixed(2), "GB");

console.log("Free memory: ", (os.freemem() / 1024 / 1024 / 1024).toFixed(2), "GB");

console.log("Hostname: ", os.hostname());

console.log("OS type: ", os.type()); // "Linux", "Darwin", "Windows_NT"

console.log("Load average: ", os.loadavg()); // [1min, 5min, 15min]

// Network interfaces — directly from OS

const nets = os.networkInterfaces();

Object.entries(nets).forEach(([name, addrs]) => {

addrs.forEach((addr) => {

if (addr.family === "IPv4" && !addr.internal) {

console.log(`Interface ${name}: ${addr.address}`);

}

});

});

Buffer — Memory Outside the V8 Heap

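Buffer is one of the most important bindings in Node.js. Its backing memory is allocated outside the V8 heap in C++ land, which is critical for I/O-heavy applications. Because the bytes sit outside the heap, large buffers do not count against V8's heap limit, and they can be handed directly to kernel system calls without copying. The Buffer object itself is still an ordinary garbage-collected JavaScript object, and its external memory is released once that object is collected.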

// Allocate 1MB outside the V8 heap

const buf = Buffer.allocUnsafe(1024 * 1024);

const mem = process.memoryUsage();

// Notice: this shows up as 'external', not heapUsed

console.log("Heap used: ", (mem.heapUsed / 1024 / 1024).toFixed(2), "MB");

console.log("External (C++):", (mem.external / 1024 / 1024).toFixed(2), "MB");

// Buffer operations are C++ under the hood

buf.fill(65); // fills with byte 65 (ASCII 'A') using memset() in C++

console.log("buf[0] as char:", String.fromCharCode(buf[0])); // 'A'

console.log("buf.toString(0-5):", buf.toString("utf8", 0, 5)); // 'AAAAA'

// Encoding between string and binary — C++ binding

const str = "Hello, Node.js Bindings!";

const encoded = Buffer.from(str, "utf8");

console.log("\nOriginal string:", str);

console.log("Hex encoding:", encoded.toString("hex")); // 48656c6c6f...

console.log("Base64 encoding:", encoded.toString("base64")); // SGVsbG8s...

console.log("Byte length:", encoded.length); // 24

const decoded = encoded.toString("utf8");

console.log("Decoded back:", decoded); // "Hello, Node.js Bindings!"
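To see the off-heap allocation more directly, you can compare process.memoryUsage() before and after creating a large Buffer. This is a sketch: the 32 MB size is arbitrary, and small deltas in heapUsed are expected because the Buffer object itself and the surrounding code still allocate a little on the heap.

```javascript
const before = process.memoryUsage();

// 32 MB of zero-filled memory; the backing store lives outside the V8 heap
const big = Buffer.alloc(32 * 1024 * 1024);

const after = process.memoryUsage();
const toMB = (bytes) => (bytes / 1024 / 1024).toFixed(1);

console.log("heapUsed delta:    ", toMB(after.heapUsed - before.heapUsed), "MB");
console.log("arrayBuffers delta:", toMB(after.arrayBuffers - before.arrayBuffers), "MB");
console.log("Buffer length:     ", toMB(big.length), "MB");
```

The arrayBuffers field (which is also included in external) should grow by roughly the size of the Buffer, while heapUsed barely moves.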

The fs Module — Binding to File System Syscalls

const fs = require("fs");

const { performance } = require("perf_hooks");

// Synchronous version — calls C++ binding which calls the OS read() syscall

// Blocks the event loop until the file is read

const start1 = performance.now();

const data = fs.readFileSync("/tmp/test-node.txt", "utf8");

console.log("Sync read took:", (performance.now() - start1).toFixed(2), "ms");

console.log("Bytes read:", Buffer.byteLength(data));

// Asynchronous version — C++ binding hands the work to libuv thread pool

// Returns immediately, fires callback when OS completes the read

const start2 = performance.now();

fs.readFile("/tmp/test-node.txt", "utf8", (err, content) => {

if (err) throw err;

console.log("Async read completed in:", (performance.now() - start2).toFixed(2), "ms");

console.log("Bytes read:", Buffer.byteLength(content));

});

console.log("fs.readFile returned — main thread not blocked");

// Promise-based version (Node 10+) — same binding, promise wrapper on top

const fsPromises = require("fs").promises;

async function readWithPromise() {

const start3 = performance.now();

const content = await fsPromises.readFile("/tmp/test-node.txt", "utf8");

console.log("Promise read completed in:", (performance.now() - start3).toFixed(2), "ms");

}

readWithPromise();

N-API — Writing Your Own Bindings

Node.js exposes a stable C API called N-API (now called Node-API) for building native add-ons. If you have ever used a package like bcrypt, sharp, canvas, or sqlite3, you have used a package that contains a native binding built with N-API or its predecessor nan.

Here is what a minimal native binding looks like conceptually (this is C++ code, shown for illustration):

// hello.cc — a native Node.js addon using Node-API

#include <node_api.h>

// This C++ function is what JavaScript calls as require('./hello').hello()

napi_value Hello(napi_env env, napi_callback_info info) {

napi_value greeting;

// napi_create_string_utf8 creates a JS string from C++

napi_create_string_utf8(env, "Hello from C++!", NAPI_AUTO_LENGTH, &greeting);

return greeting;

}

// Register the function so it is visible to JavaScript

NAPI_MODULE_INIT() {

napi_value fn;

napi_create_function(env, "hello", NAPI_AUTO_LENGTH, Hello, nullptr, &fn);

napi_set_named_property(env, exports, "hello", fn);

return exports;

}

On the JavaScript side, after building:

const addon = require("./build/Release/hello");

console.log(addon.hello()); // "Hello from C++!"

You do not need to write native addons yourself in most cases, but understanding that they exist explains why installing packages like bcrypt compiles C++ code during npm install — it is building the binding for your specific platform.

How All Three Work Together

Here is a complete example that shows V8, libuv, and bindings all participating in a single operation:

const fs = require("fs");

const { performance } = require("perf_hooks");

// Write a test file first

fs.writeFileSync("/tmp/demo.txt", "Node.js internals demo\n".repeat(500));

const start = performance.now();

console.log("[V8] Parsed and JIT-compiled this script");

console.log("[Main thread] Calling fs.readFile...");

// Step 1: Your JS code calls fs.readFile (JavaScript layer, parsed by V8)

fs.readFile("/tmp/demo.txt", "utf8", (err, data) => {

// Step 5: V8 executes this callback when the event loop delivers it

if (err) throw err;

const elapsed = (performance.now() - start).toFixed(2);

console.log("[V8] Callback executed by V8 at", elapsed + "ms");

console.log("[Result] File size:", data.length, "chars");

console.log("[Result] First line:", data.split("\n")[0]);

});

// Step 2: Node.js binding (C++) receives the call

// Step 3: Binding hands the work to libuv

// Step 4: libuv queues the file read on a thread pool worker,

// OS reads the file, worker completes, libuv puts callback

// in the event loop's poll phase queue

console.log("[Bindings] Work handed to libuv");

console.log("[libuv] File read running on thread pool worker");

console.log("[Main thread] Free to do other work\n");

// Output:

// [V8] Parsed and JIT-compiled this script

// [Main thread] Calling fs.readFile...

// [Bindings] Work handed to libuv

// [libuv] File read running on thread pool worker

// [Main thread] Free to do other work

//

// [V8] Callback executed by V8 at 1.45ms

// [Result] File size: 11500 chars

// [Result] First line: Node.js internals demo

The flow is always: your JavaScript → V8 compiles and runs it → binding bridges to C++ → libuv handles the async work → OS does the actual I/O → libuv puts the result in the event loop → V8 executes your callback.

Summary

| Component | Language | Responsibility |

|---|---|---|

| V8 | C++ | Parse, compile, and execute JavaScript. Manage heap and GC. |

| libuv | C | Event loop, async I/O, timers, thread pool, OS abstraction. |

| Node.js Bindings | C++ | Bridge JS to C++ APIs. Expose fs, crypto, Buffer, process, os, etc. |

A few things worth keeping in mind as you write Node.js code:

V8 optimizes hot code paths: writing type-consistent code in tight loops makes a real difference.

The event loop runs on a single thread: any long-running synchronous code blocks everything else.

libuv's thread pool is finite: the default size is 4, and CPU-intensive async operations like crypto.pbkdf2 compete for those slots.

Buffer memory lives outside the V8 heap: it does not count against your --max-old-space-size limit and is managed in C++ land.

Many built-in modules are bindings: fs, net, crypto, dns, zlib, os, and child_process all delegate to C++ at their core.