Cache Strategies in Distributed Systems

The Avengers of Backend Engineering — Assembled Against the Thundering Herd

System Design Cohort · March 8, 2026

18 min read · #DistributedSystems #Caching #SystemDesign


🏏

Live Scenario — Today, 7:00 PM

India vs New Zealand T20 Final on JioHotstar. 300 million fans. One platform. One cache layer standing between epic cricket and a catastrophic outage. This is the story of the heroes that save it.

It's 6:55 PM. Rohit Sharma is walking to the toss. Your phone is open. 300 million other phones are open. In that single moment, JioHotstar's distributed systems face what the database engineering team quietly calls the most dangerous five seconds of the year.

If caches are cold, the database sees an avalanche it was never designed to handle. If caches expire together, a different kind of avalanche hits — the Thundering Herd. And if nobody thought about this? You get buffering screens. Rage tweets. A PR catastrophe at the worst possible moment.

This is not a horror story. This is the story of how great distributed systems are engineered — using five battle-tested cache strategies that act as Avengers-level protection for high-traffic systems.

Suit up. Let's go.

origin story

ISSUE #01

Why Basic TTL Caching Isn't Enough

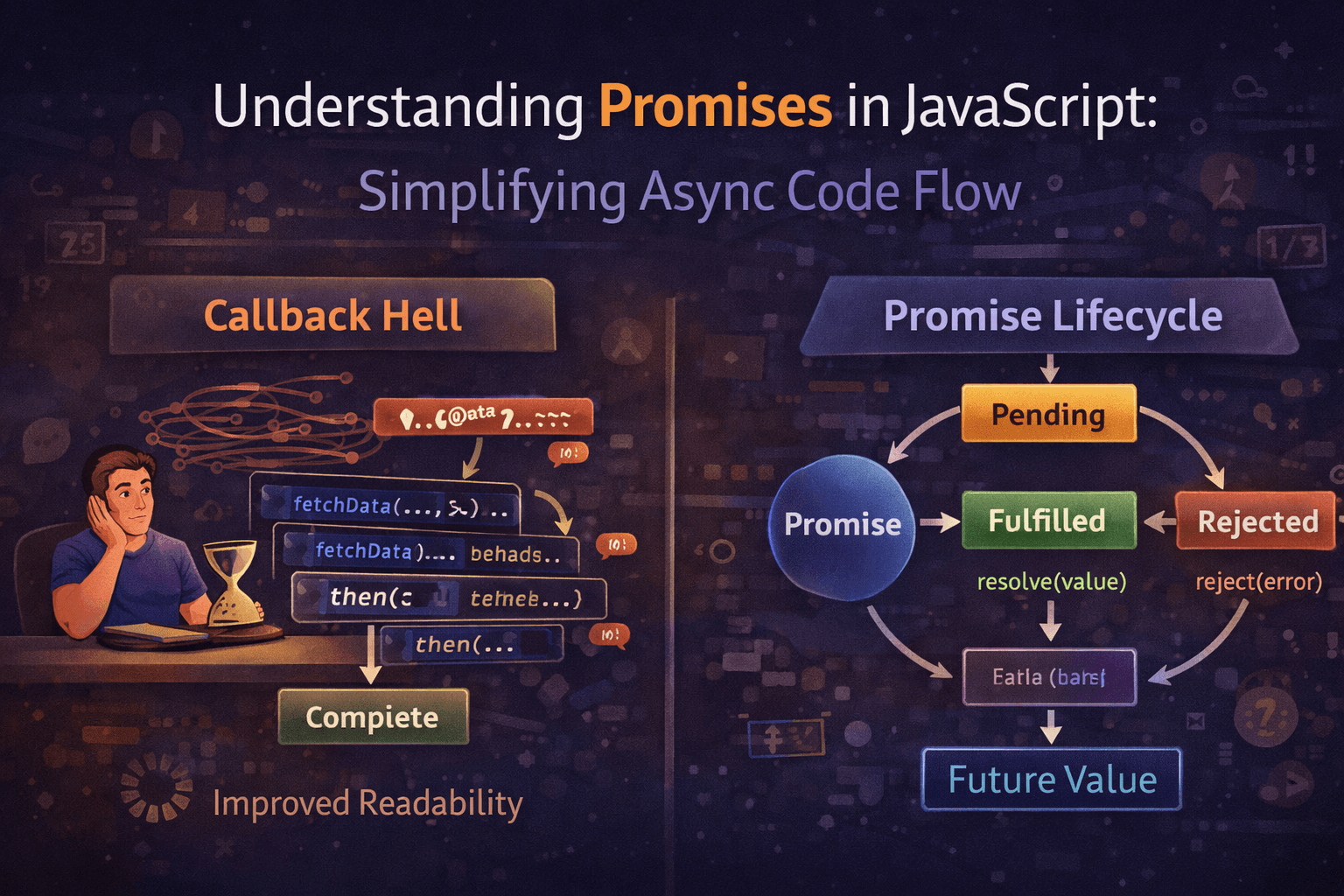

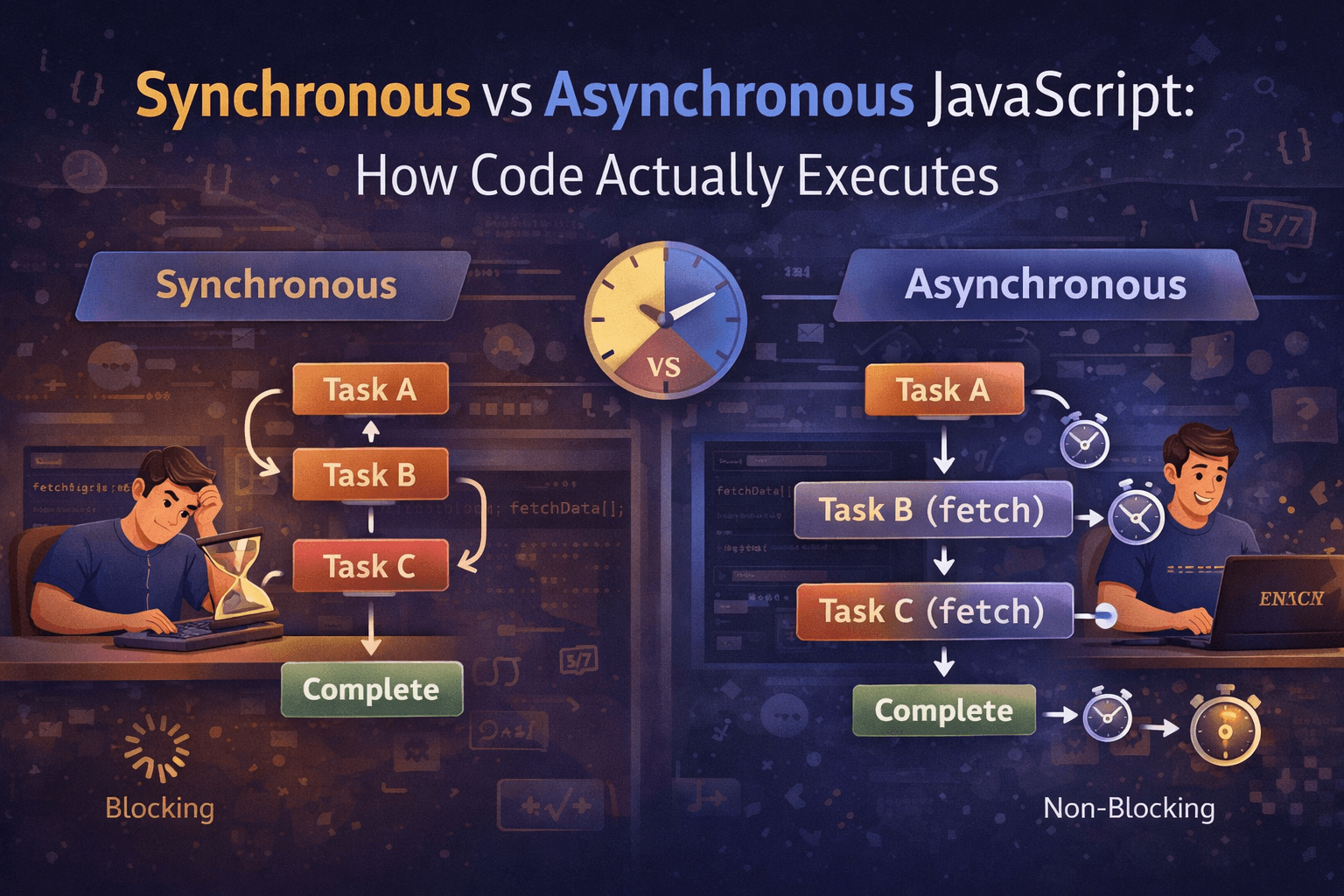

Every distributed system story starts the same way. Your app is growing. Database queries are slow. Someone says: "Let's cache it." You store data in Redis with a TTL, a fixed expiry time. When the TTL runs out, the key expires. The next request recomputes the value and refills the cache.

Clean. Simple. Wrong — at scale.

Basic TTL works beautifully for low-traffic, spread-out use cases. The fatal flaw reveals itself only under coordinated, synchronized load — which is exactly what every JioHotstar match creates. Millions of users open the app at the same time. They all fetch the same cached keys. Those keys were all cached around the same time. And they all expire at exactly the same time.

⚡ The Core Problem Basic TTL assumes cache misses are rare and spread out. But in distributed systems under synchronized load, they cluster together — creating a burst of simultaneous recomputation that overwhelms the origin database.
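To make the pattern concrete, here is a minimal sketch of cache-aside with a fixed TTL. It is an illustration, not JioHotstar's code: `TTLCache`, `get_or_load`, and the injectable `clock` are hypothetical names, and the in-memory dictionary stands in for Redis (where the write would be a `SET key value EX 300`).

```python
import time


class TTLCache:
    """Tiny in-memory cache with a per-entry TTL (a stand-in for Redis)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._entries = {}  # key -> (value, expires_at)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # expired: behaves like a miss
            return None
        return value

    def set(self, key, value, ttl):
        self._entries[key] = (value, self._clock() + ttl)


def get_or_load(cache, key, loader, ttl=300):
    """Cache-aside: return the cached value, or recompute and refill on a miss."""
    value = cache.get(key)
    if value is None:
        value = loader(key)  # every caller that sees the miss hits the origin
        cache.set(key, value, ttl)
    return value
```

Note the comment on the `loader` call: nothing here stops a thousand concurrent callers from all seeing the same miss and all calling the origin. That gap is what the rest of the article closes.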

This is the origin story of the Thundering Herd — and it's the villain every strategy in this article is designed to defeat.

the villain

ISSUE #02

Cache Expiry & Traffic Spikes: The Thundering Herd

Here's the scenario in precise terms. JioHotstar sets TTL = 300 seconds for match scorecard data. At 6:55 PM, all 50 app servers cache this data. At 7:00:00 PM sharp, every cached key expires simultaneously. Every one of those 300 million requests hits the origin database at the same moment.

Your database handles 10,000 queries/second on a good day. It has just been asked for 2,000,000 queries/second, 200 times that.

Synchronized TTL Expiry — The Villain's Plan

Time → 6:55:00 PM 7:00:00 PM

──────────────────────────────────────────────────────────

Key A: [■■■■■■■■■■■■■■■■■■] EXPIRED ← 50M requests hit DB

Key B: [■■■■■■■■■■■■■■■■■■] EXPIRED ← 80M requests hit DB

Key C: [■■■■■■■■■■■■■■■■■■] EXPIRED ← 60M requests hit DB

Key D: [■■■■■■■■■■■■■■■■■■] EXPIRED ← 110M requests hit DB

↓

ALL HIT DATABASE SIMULTANEOUSLY

DB Load: 2,000,000 queries/sec 💥 CRASH

This failure mode has a name: Cache Stampede. It's been responsible for outages at Netflix, Amazon, Twitter, and countless others. It's not a bug in your code — it's an architectural oversight in how you set up your caching layer.

Now meet the heroes.

heroes assemble

HERO #01 — CAPTAIN CHAOS

TTL Jitter: The Randomizer

Power: Spreading recomputation load across time instead of a single moment

TTL Jitter is the simplest, most universally applicable defense against the Thundering Herd. Instead of setting all cache keys to expire at the same fixed TTL, you add a random offset to each entry's expiration time.

Keys that were cached together now expire at slightly different times — spreading the recomputation load across a window instead of a single millisecond.

Formula

TTL_actual = base_TTL + random(0, jitter_window)

For JioHotstar's scorecard cache:

base_TTL = 300s, jitter = random(0, 60s)

Result: Keys expire between 300s and 360s, spread over a full minute. The 2,000,000 recomputations that would have landed in a single second are now spread across 60, so the peak drops to roughly 2,000,000 / 60 ≈ 33,000 QPS.
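The formula above can be sketched in a few lines of Python. The function names and the 60,000-key simulation are assumptions made for illustration; the point is only to compare the busiest one-second bucket with and without jitter.

```python
import random
from collections import Counter


def jittered_ttl(base_ttl=300, jitter_window=60, rng=random):
    """TTL_actual = base_TTL + random(0, jitter_window), in seconds."""
    return base_ttl + rng.uniform(0, jitter_window)


def peak_expiries_per_second(ttls):
    """Bucket expiry times into whole seconds and return the busiest bucket."""
    buckets = Counter(int(ttl) for ttl in ttls)
    return max(buckets.values())


rng = random.Random(42)  # fixed seed so the comparison is repeatable
n_keys = 60_000

fixed = [300 for _ in range(n_keys)]
jittered = [jittered_ttl(300, 60, rng) for _ in range(n_keys)]

# With a fixed TTL every key lands in the same second.
print("fixed TTL peak:   ", peak_expiries_per_second(fixed))
# With jitter the peak is roughly n_keys / 60, plus some random variation.
print("jittered TTL peak:", peak_expiries_per_second(jittered))
```

A uniform offset is the simplest choice. The width of the window is the tuning knob: a wider window flattens the peak further but lets some entries live longer than the base TTL.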

Jittered TTL — Hero's Defense

Time → 7:00:00 7:00:12 7:00:24 7:00:36 7:00:48 7:01:00

──────────────────────────────────────────────────────────────

Key A: ↑ expires (300 + 12s)

Key B: ↑ expires (300 + 24s)

Key C: ↑ expires (300 + 36s)

Key D: ↑ expires (300 + 48s)

DB Load: ~33,000 queries/sec (spread, manageable) ✅

vs. 2,000,000 queries/sec with synchronised TTL

Jitter alone can reduce peak database load by 80–90%. It's the first line of defense and costs almost nothing to implement. But it doesn't eliminate stampedes entirely — individual hot keys still cause spikes on expiry. That's where the next heroes come in.

HERO #02 — DOCTOR STRANGE

Probabilistic Early Re-computation (PER)

Power: Seeing the future — refreshing the cache before it even expires

What if your cache could notice that an entry is about to expire and refresh it early? That's the idea behind Probabilistic Early Re-computation (PER), also described as probabilistic early expiration. XFetch is the best-known algorithm for it.

As a cache entry approaches its expiration time, each incoming request has an increasing probability of triggering a background recomputation — even before the TTL hits zero. The closer to expiry, the higher the chance. By the time the TTL actually runs out, the cache has almost certainly been refreshed already.

⚡ The Prophet's Logic

Imagine the scorecard has 30 seconds left. With illustrative numbers: at T-30s → 25% refresh chance. At T-15s → 60%. At T-5s → 80%. The stampede never happens because someone always refreshed it early. Doctor Strange has already seen the future.

Probabilistic Early Expiration Timeline

TTL Remaining: 60s 45s 30s 15s 0s

────────────────────────────────────────────────────────────

Refresh Prob: 2% 10% 25% 60% 100%

Standard: ────────────────────────────────────── EXPIRE 💥

PER: ────────────────── ↑ Background Refresh ─── Never Expires

(single worker refreshes silently)

JioHotstar uses this for live match state — current score, overs bowled, run rate. As the cached snapshot ages, background workers are increasingly likely to refresh it. By the time the next 50 million requests arrive, the data is fresh and the cache is already warm.
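As a rough sketch of the idea, here is the XFetch check as it is usually written: refresh early when `now - delta * beta * ln(rand)` reaches the expiry time. The function and parameter names are my own, and the percentages in the timeline above are illustrative; this formula produces an exponential curve whose shape depends on `delta` (how long a recomputation takes) and `beta`.

```python
import math
import random
import time


def should_refresh_early(expiry, delta, beta=1.0, now=None, rng=random):
    """XFetch-style check: True means 'recompute now, before the TTL runs out'.

    expiry: absolute expiry time of the cached entry (seconds)
    delta:  how long the last recomputation took (seconds)
    beta:   > 1 refreshes earlier, < 1 refreshes later
    """
    if now is None:
        now = time.time()
    # 1 - random() lies in (0, 1], so log() is defined and never positive.
    # The resulting gap is a random, exponentially distributed head start
    # scaled by delta * beta.
    gap = -delta * beta * math.log(1.0 - rng.random())
    return now + gap >= expiry


def refresh_probability(remaining, delta, beta=1.0, trials=20_000, seed=7):
    """Estimate the per-request refresh chance with `remaining` seconds of TTL left."""
    rng = random.Random(seed)
    hits = sum(
        should_refresh_early(expiry=remaining, delta=delta, beta=beta, now=0.0, rng=rng)
        for _ in range(trials)
    )
    return hits / trials


for remaining in (60, 45, 30, 15, 0):
    print(remaining, "s left ->", refresh_probability(remaining, delta=10))
```

The probability is per request. On a hot key with thousands of requests per second, even a small per-request chance means some request almost certainly refreshes the entry before it expires. To keep that refresh to a single worker, the check is typically combined with a lock like the one described in the next section.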

HERO #03 — NICK FURY

Mutex / Cache Locking: The Gatekeeper

Power: Only one request allowed through the door. Everyone else waits.

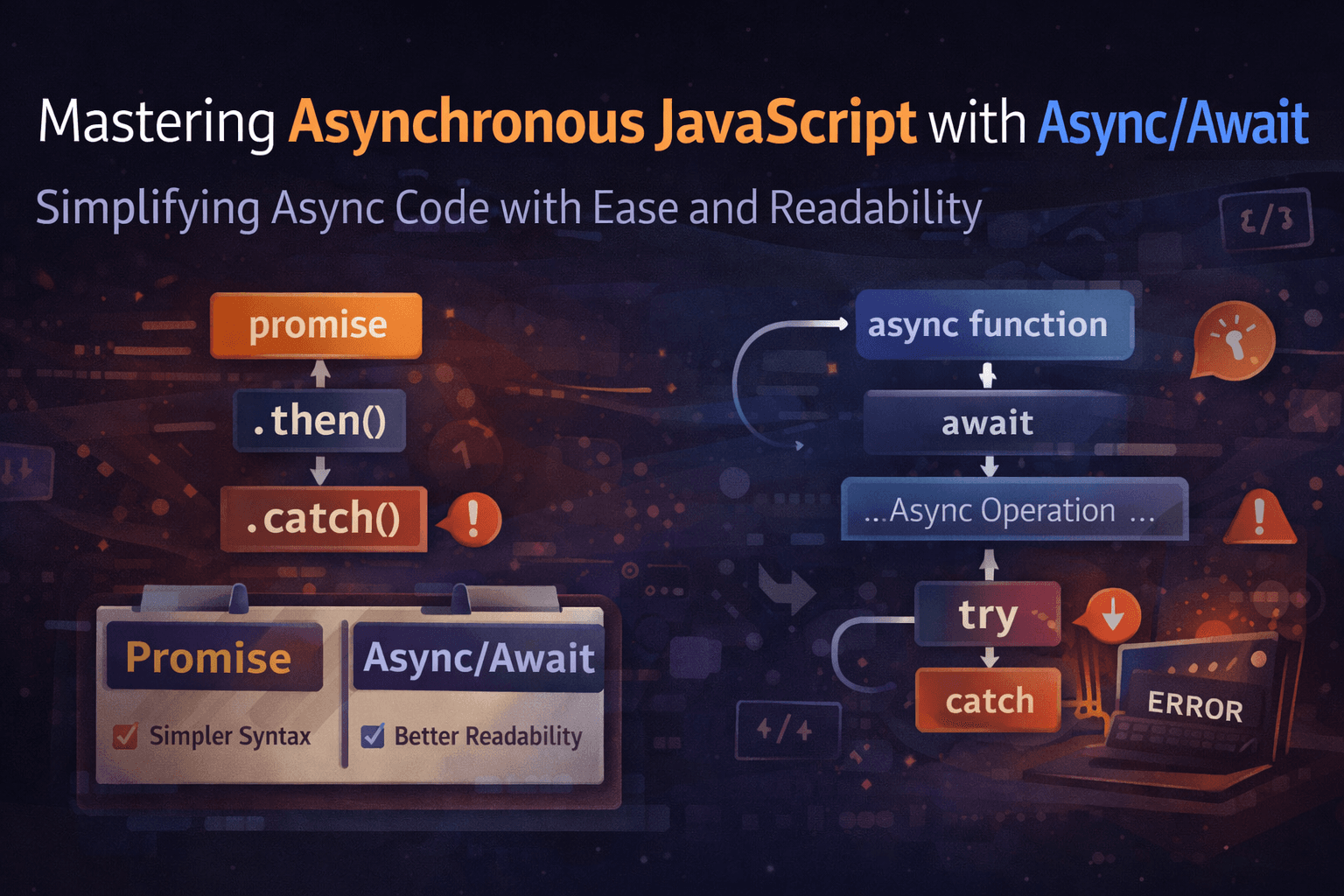

Even with jitter and PER, a cold cache miss can still happen — especially for keys that were never cached to begin with. When a miss occurs, you face a race: multiple concurrent requests all discover the miss and all rush to recompute simultaneously.

Mutex locking solves this with absolute authority: when a cache miss occurs, only ONE request is allowed to recompute and refill the cache. All other concurrent requests either wait at the lock, or are served stale data if available.

Mutex Locking Flow — Nick Fury's Door

300M requests arrive → Cache MISS on 'match_score_key'

─────────────────────────────────────────────────────────────

Request #1: Acquires Lock → Computes → Fills Cache → Releases Lock ✅

Request #2: Waits at lock .......................→ Cache HIT ✅

Request #3: Waits at lock .......................→ Cache HIT ✅

Request #4: Waits at lock .......................→ Cache HIT ✅

... 299,999,996 others → all served from cache ✅

DB receives: 1 query (not 300 million) 🎯

⚠️ Critical Warning

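The lock MUST have its own TTL. If the computing request crashes before releasing, you've replaced a stampede with a permanent deadlock. Even Nick Fury can lock himself in his own vault. Use a single Redis SET with the NX and EX options, which creates the key and sets its expiry atomically: SET lock_key 1 EX 10 NX. The older SETNX command cannot attach an expiry on its own.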

The pattern: try cache → on miss, try to take the distributed lock (SET with NX and EX) → if acquired, compute and fill → release. If not acquired, retry after a brief sleep, or serve stale data. Simple. Ruthlessly effective.
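The pattern above can be sketched as follows. To keep the example runnable without a server, `FakeStore` is a hypothetical in-memory stand-in whose `set(..., nx=True, ex=...)` mimics Redis `SET key value NX EX`. With redis-py the acquire step would be `r.set(lock_key, token, nx=True, ex=10)`, and the compare-and-delete release is usually done with a small Lua script. All names here are assumptions.

```python
import threading
import time
import uuid


class FakeStore:
    """In-memory stand-in for a Redis-like store (hypothetical, for illustration)."""

    def __init__(self):
        self._data = {}  # key -> (value, expires_at or None)
        self._guard = threading.Lock()

    def _live(self, key):
        item = self._data.get(key)
        if item is not None and item[1] is not None and time.monotonic() >= item[1]:
            del self._data[key]  # lazily drop expired keys
            return None
        return item

    def get(self, key):
        with self._guard:
            item = self._live(key)
            return None if item is None else item[0]

    def set(self, key, value, ex=None, nx=False):
        with self._guard:
            if nx and self._live(key) is not None:
                return False  # NX: refuse to overwrite a live key
            expires_at = None if ex is None else time.monotonic() + ex
            self._data[key] = (value, expires_at)
            return True

    def delete_if_equals(self, key, expected):
        with self._guard:
            item = self._live(key)
            if item is not None and item[0] == expected:
                del self._data[key]
                return True
            return False


def get_with_lock(store, key, loader, ttl=300, lock_ttl=10, wait=0.01, max_wait=5.0):
    """Try cache; on a miss, let only the lock holder recompute and refill."""
    value = store.get(key)
    if value is not None:
        return value
    lock_key = f"lock:{key}"
    token = uuid.uuid4().hex  # unique token so we only release our own lock
    deadline = time.monotonic() + max_wait
    while time.monotonic() < deadline:
        if store.set(lock_key, token, ex=lock_ttl, nx=True):
            try:
                value = store.get(key)  # re-check: someone may have just filled it
                if value is None:
                    value = loader()
                    store.set(key, value, ex=ttl)
                return value
            finally:
                store.delete_if_equals(lock_key, token)
        time.sleep(wait)  # lock is held elsewhere: back off, then look again
        value = store.get(key)
        if value is not None:
            return value
    raise TimeoutError(f"gave up waiting for {key!r}")
```

Two details matter in this sketch. The lock value is a random token, so a slow worker whose lock already expired cannot delete a lock that now belongs to someone else. And the winner re-checks the cache after acquiring the lock, which stops late arrivals from recomputing a value that was filled a moment earlier.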

HERO #04 — THE WATCHER

Stale-While-Revalidate: The Time-Keeper

Power: Serves instantly, revalidates in the background, never blocks

Stale-While-Revalidate (SWR) is one of the most elegant patterns in distributed systems. When a cache entry expires, instead of blocking while you recompute, you serve the stale data immediately and trigger a background refresh at the same time.

The user gets an instant response. The next request gets fresher data. The database is never stampeded.

Stale-While-Revalidate Flow

Request arrives at T+301s (entry expired at T+300s)

─────────────────────────────────────────────────────────────

Step 1: Entry is STALE (expired) → Serve STALE data immediately ← User sees in 1ms

Step 2: Background worker triggered → Queries DB for fresh data

Step 3: Cache updated with fresh result

Step 4: Next request at T+302s → Cache HIT with fresh data ✅

User Latency: ~1ms | DB Load: 1 query per expiry window

CDNs like Cloudflare and Fastly make SWR a first-class feature. When you see Cache-Control: max-age=300, stale-while-revalidate=60 in HTTP headers — that's exactly this. JioHotstar uses SWR for player images, team logos, match schedules, and semi-dynamic commentary feeds.

⚡ The Tradeoff

The first user after expiry always gets slightly stale data. For match scores, a 1-second lag is totally fine. For a payment confirmation? Absolutely not. Know your tolerance before deploying SWR.
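Here is a minimal application-level sketch of the same behavior, assuming an in-process cache and a background thread per refresh. `SWRCache` and its parameters are hypothetical names; `max_age` and `stale_window` mirror the `max-age` and `stale-while-revalidate` values in the header shown earlier.

```python
import threading
import time


class SWRCache:
    """Serve stale entries immediately while one background thread refreshes them."""

    def __init__(self, loader, max_age=300, stale_window=60, clock=time.monotonic):
        self._loader = loader
        self._max_age = max_age
        self._stale_window = stale_window
        self._clock = clock
        self._entries = {}        # key -> (value, stored_at)
        self._refreshing = set()  # keys with a refresh already in flight
        self._lock = threading.Lock()

    def get(self, key):
        with self._lock:
            entry = self._entries.get(key)
            if entry is not None:
                value, stored_at = entry
                age = self._clock() - stored_at
                if age < self._max_age:
                    return value              # fresh hit
                if age < self._max_age + self._stale_window:
                    self._start_refresh(key)  # at most one refresh per key
                    return value              # stale, but instant
        # Missing, or too old to serve even as stale: load synchronously.
        return self._store(key)

    def _start_refresh(self, key):
        # Caller must hold self._lock.
        if key not in self._refreshing:
            self._refreshing.add(key)
            threading.Thread(target=self._refresh, args=(key,), daemon=True).start()

    def _refresh(self, key):
        try:
            self._store(key)
        finally:
            with self._lock:
                self._refreshing.discard(key)

    def _store(self, key):
        value = self._loader(key)  # runs outside the lock so readers are not blocked
        with self._lock:
            self._entries[key] = (value, self._clock())
        return value
```

The `_refreshing` set is what keeps the database load at one query per expiry window: a thousand requests may see the stale entry, but only the first one starts a refresh. Once an entry is older than `max_age + stale_window`, this sketch stops serving it and falls back to a blocking load.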

HERO #05 — BLACK WIDOW

Cache Warming: The Scout

Power: Prepares the battlefield long before the battle begins

Cache Warming is the proactive hero — it fills your cache before traffic arrives, so the first real user never experiences a cold miss. No waiting. No recomputation. No stampede. The data is already there.

For JioHotstar, cache warming before the T20 Final means automated scripts start loading the cache at 6:00 PM, an hour before the 7:00 PM start. Match metadata, team rosters, player statistics, streaming endpoints, and ad configurations are all warmed up in priority order before Rohit Sharma walks to the toss.

Cache Warming — Pre-Match Timeline

6:00 PM ──── Tier 1: Team rosters, player stats (slow-changing)

6:20 PM ──── Tier 2: Match schedule, venue, umpires

6:40 PM ──── Tier 3: Streaming URLs, CDN edge configs

6:55 PM ──── Tier 4: Live scorecard, toss result, XI

─────────────────────────────────────────────────────────

7:00 PM → MATCH STARTS → 300M requests → 99% Cache HIT ✅

Without warming: 7:00 PM → 300M requests → 99% Cache MISS 💥

Netflix runs cache warming scripts for hours before every major release. Before a big series drop, content metadata, thumbnails, recommendation lists, and user preference caches are pre-loaded across every edge node globally.

Amazon and Flipkart warm caches hours before flash sales — product pages, pricing, inventory counts, recommendation carousels — so the first sale-hunter gets a sub-100ms response, not a cold cache miss.
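A warming script can be very small. The sketch below assumes a dict-like cache and a `loader` that fetches one key from the origin; the key names and tier grouping are hypothetical and simply mirror the pre-match timeline above. Scheduling each tier at its clock time is left out.

```python
def warm_cache(cache, tiers, loader):
    """Fill `cache` tier by tier, in priority order.

    tiers:  list of (tier_name, [keys]) pairs, highest priority first
    loader: function that fetches one key from the origin
    Returns a list of (tier_name, key, error) for keys that failed to load.
    """
    failures = []
    for tier_name, keys in tiers:
        for key in keys:
            try:
                cache[key] = loader(key)
            except Exception as exc:  # keep warming even if one key fails
                failures.append((tier_name, key, str(exc)))
    return failures


# Hypothetical keys, grouped like the pre-match timeline.
TIERS = [
    ("tier1", ["roster:IND", "roster:NZ", "player_stats"]),
    ("tier2", ["schedule", "venue", "umpires"]),
    ("tier3", ["stream_urls", "cdn_edge_config"]),
    ("tier4", ["scorecard", "toss", "playing_xi"]),
]
```

Returning the failures instead of raising is a deliberate choice in this sketch: one slow origin call should not leave every later tier cold, and the list gives the on-call engineer something to retry before traffic arrives.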

the avengers table

ISSUE #06

Tradeoffs: Freshness, Latency & Consistency

No single strategy wins on all three dimensions. The art is in knowing which levers matter most for your specific use case.

| Strategy | Freshness | Latency | Consistency | Best For |
|---|---|---|---|---|
| Basic TTL | Medium | Low | Medium | Static, low-traffic data |
| TTL Jitter | Medium | Low | Medium | Always (apply everywhere) |
| Probabilistic (PER) | High | Low | High | Hot keys, live scoreboards |
| Mutex Locking | High | Medium | Very High | Financial data, auth tokens |
| Stale-While-Revalidate | Lower | Very Low | Lower | CDN, UI configs, media |
| Cache Warming | High | Very Low | High | Predictable traffic spikes |

real world

ISSUE #07

Real-World Playbooks

JioHotstar

Jitter on all match data. Warming from 6 PM. SWR for commentary. Mutex for streaming token generation. PER for live scorecards. All five heroes deployed together.

Netflix

Pre-warms content metadata globally 6 hours before drops. SWR for recommendation caches. Jitter on personalization TTLs to prevent global coordinated expiry.

Amazon / Flipkart

Cache warming 2 hours before flash sales. Mutex on inventory write-through. Jitter on price caches. SWR for non-critical UI. Prevents stampedes at sale open.

CDN Providers

Cloudflare and Fastly implement SWR natively via Cache-Control headers. Edge nodes use probabilistic early refresh for hot assets close to TTL boundary.

mission briefing

ISSUE #08

When to Use Which Strategy

Think of assembling your Avengers team based on the mission type:

Always deploy TTL Jitter — It's your base shield. Zero cost, 80–90% spike reduction. There is no valid reason to skip it on any distributed cache.

Add Cache Warming when you can predict spikes — product launches, match kickoffs, election nights, Black Friday. If you know traffic is coming, prepare for it.

Use SWR for high-read, slight-lag-tolerant data — CDN assets, UI configs, recommendation lists, news feeds. Never for financial or inventory data.

Use Mutex Locking when stale means wrong — financial totals, inventory counts, auth tokens, payment state. Correctness beats latency here.

Use Probabilistic Early Expiration for hot keys under sustained high read load — live scoreboards, trending feeds, viral content, real-time leaderboards.
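The rules above can be condensed into a small decision helper. This is only one way to encode the article's guidance; the function name, flags, and strategy labels are hypothetical.

```python
def choose_strategies(predictable_spike=False, stale_tolerant=False,
                      stale_means_wrong=False, hot_key=False):
    """Map a key's traits to the cache strategies suggested in this article."""
    strategies = ["ttl_jitter"]  # the base shield: always on
    if predictable_spike:
        strategies.append("cache_warming")
    if stale_means_wrong:
        # Correctness beats latency, so SWR is ruled out even if requested.
        strategies.append("mutex_locking")
    elif stale_tolerant:
        strategies.append("stale_while_revalidate")
    if hot_key:
        strategies.append("probabilistic_early_recompute")
    return strategies


# A live scorecard: known spike at match start, hot key, slight lag is fine.
print(choose_strategies(predictable_spike=True, stale_tolerant=True, hot_key=True))
```

The one ordering rule worth noting is that `stale_means_wrong` wins over `stale_tolerant`, matching the advice never to use SWR for financial or inventory data.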

The Avengers of Backend Engineering

TTL Jitter · Cache Warming · SWR · Mutex · Probabilistic Expiry

Together, they protect your system from the Thundering Herd.

They'll make sure 300 million fans watch every boundary, every six, every wicket of India vs New Zealand, without a single dropped frame.